By Technology Review

This list marks 20 years since we began compiling an annual selection of the year’s most important technologies…

Some, such as mRNA vaccines, are already changing our lives, while others are still a few years off. Below, you’ll find a brief description of each, along with a link to a feature article that probes the technology in detail. We hope you enjoy exploring them. Taken together, we believe, these technologies offer a glimpse into our collective future.

Messenger RNA vaccines

We got very lucky. The two most effective vaccines against the coronavirus are based on messenger RNA, a technology that has been in the works for 20 years. When the covid-19 pandemic began in January 2020, scientists at several biotech companies were quick to turn to mRNA as a way to create potential vaccines. In December 2020, at a time when more than 1.5 million people had died from covid-19 worldwide, the vaccines were authorized for emergency use in the US, marking the beginning of the end of the pandemic.

The new covid vaccines are based on a technology never before used in therapeutics, and it could transform medicine, leading to vaccines against various infectious diseases, including malaria. And if this coronavirus keeps mutating, mRNA vaccines can be easily and quickly modified. Messenger RNA also holds great promise as the basis for cheap gene fixes to sickle-cell disease and HIV. Also in the works: using mRNA to help the body fight off cancers. Antonio Regalado explains the history and medical potential of the exciting new science of messenger RNA.

GPT-3

Large natural-language computer models that learn to write and speak are a big step toward AI that can better understand and interact with the world. GPT-3 is by far the largest—and most literate—to date. Trained on the text of thousands of books and most of the internet, GPT-3 can mimic human-written text with uncanny—and at times bizarre—realism, making it the most impressive language model yet produced using machine learning.

But GPT-3 doesn’t understand what it’s writing, so sometimes the results are garbled and nonsensical. Training it takes an enormous amount of computing power, data, and money, which creates a large carbon footprint and restricts the development of similar models to labs with extraordinary resources. And because it is trained on text from the internet, which is filled with misinformation and prejudice, it often produces similarly biased passages. Will Douglas Heaven shows off a sample of GPT-3’s clever writing and explains why some are ambivalent about its achievements.

TikTok recommendation algorithms

Since its launch in China in 2016, TikTok has become one of the world’s fastest-growing social networks. It’s been downloaded billions of times and attracted hundreds of millions of users. Why? Because the algorithms that power TikTok’s “For You” feed have changed the way people become famous online.

While other platforms are geared more toward highlighting content with mass appeal, TikTok’s algorithms seem just as likely to pluck a new creator out of obscurity as they are to feature a known star. And they’re particularly adept at feeding relevant content to niche communities of users who share a particular interest or identity.

The ability of new creators to get a lot of views very quickly—and the ease with which users can discover so many kinds of content—have contributed to the app’s stunning growth. Other social media companies are now scrambling to reproduce these features on their own apps. Abby Ohlheiser profiles a TikTok creator who was surprised by her own success on the platform.

Lithium-metal batteries

Electric vehicles come with a tough sales pitch; they’re relatively expensive, and you can drive them only a few hundred miles before they need to recharge—which takes far longer than stopping for gas. All these drawbacks have to do with the limitations of lithium-ion batteries. A well-funded Silicon Valley startup now says it has a battery that will make electric vehicles far more palatable for the mass consumer.

It’s called a lithium-metal battery and is being developed by QuantumScape. According to early test results, the battery could boost the range of an EV by 80% and could be rapidly recharged. The startup has a deal with VW, which says it will be selling EVs with the new type of battery by 2025.

The battery is still just a prototype, much smaller than one needed for a car. But if QuantumScape and others working on lithium-metal batteries succeed, the technology could finally make EVs attractive to millions of consumers. James Temple describes how a lithium-metal battery works, and why scientists are so excited by recent results.

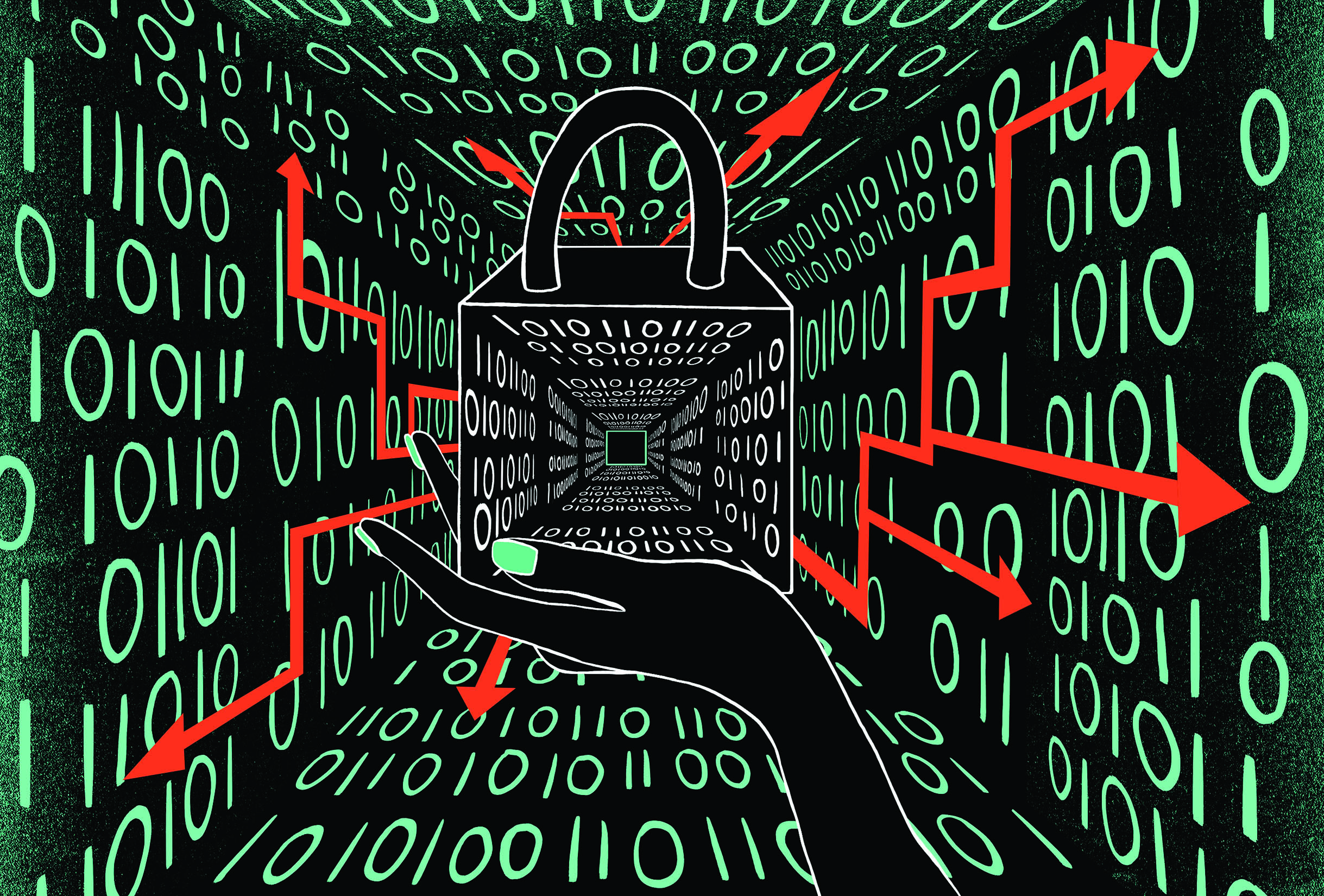

Data trusts

Technology companies have proven to be poor stewards of our personal data. Our information has been leaked, hacked, and sold and resold more times than most of us can count. Maybe the problem isn’t with us, but with the model of privacy to which we’ve long adhered—one in which we, as individuals, are primarily responsible for managing and protecting our own privacy.

Data trusts offer one alternative approach that some governments are starting to explore. A data trust is a legal entity that collects and manages people’s personal data on their behalf. Though the structure and function of these trusts are still being defined, and many questions remain, data trusts are notable for offering a potential solution to long-standing problems in privacy and security. Anouk Ruhaak describes the powerful potential of this model and a few early examples that show its promise.

Green hydrogen

Hydrogen has always been an intriguing possible replacement for fossil fuels. It burns cleanly, emitting no carbon dioxide; it’s energy dense, so it’s a good way to store power from intermittent renewable sources; and it can be used to make liquid synthetic fuels that are drop-in replacements for gasoline or diesel. But most hydrogen up to now has been made from natural gas, a process that is dirty and energy intensive.

The rapidly dropping cost of solar and wind power means green hydrogen is now cheap enough to be practical. Simply zap water with electricity, and presto, you’ve got hydrogen. Europe is leading the way, beginning to build the needed infrastructure. Peter Fairley argues that such projects are just a first step to an envisioned global network of electrolysis plants that run on solar and wind power, churning out clean hydrogen.
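The "zap" step is electrolysis, in which an electric current splits water into hydrogen and oxygen. The overall reaction is:

$$2\,\mathrm{H_2O} \rightarrow 2\,\mathrm{H_2} + \mathrm{O_2}$$

The hydrogen counts as green only when the electricity driving this reaction comes from renewable sources such as solar and wind.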

Digital contact tracing

As the coronavirus began to spread around the world, it felt at first as if digital contact tracing might help us. Smartphone apps could use GPS or Bluetooth to create a log of people who had recently crossed paths. If one of them later tested positive for covid, that person could enter the result into the app, and it would alert others who might have been exposed.
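To make that mechanism concrete, here is a toy Python sketch of the Bluetooth-based, decentralized variant, assuming each phone broadcasts random tokens and privately records the tokens it hears nearby. The class `Phone` and the function `cross_paths` are hypothetical names for illustration. This is a simplification, not the Apple/Google Exposure Notification protocol, which derives its rotating identifiers from daily keys using cryptography.

```python
import secrets
from dataclasses import dataclass, field


@dataclass
class Phone:
    """Hypothetical toy phone: broadcasts random tokens and logs tokens it hears."""
    sent: list[bytes] = field(default_factory=list)  # tokens this phone broadcast
    heard: set[bytes] = field(default_factory=set)   # tokens received from nearby phones

    def broadcast(self) -> bytes:
        # A fresh random token each time, so a token alone does not identify the owner.
        token = secrets.token_bytes(16)
        self.sent.append(token)
        return token

    def hear(self, token: bytes) -> None:
        self.heard.add(token)

    def check_exposure(self, published: set[bytes]) -> bool:
        # Exposed if any token heard nearby came from someone who reported a positive test.
        return not self.heard.isdisjoint(published)


def cross_paths(a: Phone, b: Phone) -> None:
    """Simulate two phones coming within Bluetooth range of each other."""
    b.hear(a.broadcast())
    a.hear(b.broadcast())


alice, bob, carol = Phone(), Phone(), Phone()
cross_paths(alice, bob)  # Alice and Bob meet; Carol never meets either of them

# Alice tests positive and uploads only the tokens her own phone broadcast.
published = set(alice.sent)

print(bob.check_exposure(published))    # True
print(carol.check_exposure(published))  # False
```

In this design, matching happens on each phone against the published tokens, so no central list of who met whom is needed.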

But digital contact tracing did little to slow the virus’s spread. Apple and Google quickly pushed out features like exposure notifications to many smartphones, but public health officials struggled to persuade residents to use them. The lessons of this pandemic could help us prepare for the next one and could also carry over to other areas of health care. Lindsay Muscato explores why digital contact tracing failed to slow covid-19 and offers ways we can do better next time.

Hyper-accurate positioning

We all use GPS every day; it has transformed our lives and many of our businesses. But while today’s GPS is accurate to within 5 to 10 meters, new hyper-accurate positioning technologies are accurate to within a few centimeters or even millimeters. That’s opening up new possibilities, from landslide warnings to delivery robots and self-driving cars that can safely navigate streets.

China’s BeiDou (Big Dipper) global navigation system was completed in June 2020 and is part of what’s making all this possible. It provides positioning accuracy of 1.5 to 2 meters to anyone in the world. Using ground-based augmentation, it can get down to millimeter-level accuracy. Meanwhile, GPS, which has been around since the early 1990s, is getting an upgrade: four GPS III satellites had launched by November 2020, and more are expected in orbit by 2023. Ling Xin reports on how the greatly increased accuracy of these systems is already proving useful.
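Ground-based augmentation works, in its simplest form, like differential positioning: a reference station at a precisely surveyed location compares its known position with the one computed from satellite signals and broadcasts the difference, which nearby receivers apply to their own fixes because they see largely the same errors. The Python sketch below shows only that core idea in a flat east/north frame; the coordinates are made-up values, and `Fix`, `correction`, and `apply_correction` are hypothetical helpers. Real systems that reach centimeter or millimeter level correct each satellite's measurements separately and rely on techniques such as carrier-phase tracking.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fix:
    """Hypothetical horizontal position in meters (east, north) in a local flat frame."""
    east: float
    north: float


def correction(surveyed: Fix, measured: Fix) -> Fix:
    # Error seen at the reference station: true position minus satellite-derived position.
    return Fix(surveyed.east - measured.east, surveyed.north - measured.north)


def apply_correction(measured: Fix, corr: Fix) -> Fix:
    # A nearby receiver assumes it shares the station's error and adds the correction.
    return Fix(measured.east + corr.east, measured.north + corr.north)


# Made-up numbers: the station is surveyed at the origin, but satellites place it 1.5 m off.
station_true = Fix(0.0, 0.0)
station_measured = Fix(1.2, -0.9)
corr = correction(station_true, station_measured)

# A receiver 100 m east and 50 m north of the station sees nearly the same error.
rover_measured = Fix(101.2, 49.1)
rover_fixed = apply_correction(rover_measured, corr)
print(rover_fixed)  # close to Fix(east=100.0, north=50.0)
```

The approach only helps receivers near the station, since satellite-signal errors grow less similar with distance; that is one reason augmentation relies on networks of ground stations.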

Remote everything

The covid pandemic forced the world to go remote. Getting that shift right has been especially critical in health care and education. Some places around the world have done a particularly good job of getting remote services in these two areas to work well for people.

Snapask, an online tutoring company, has more than 3.5 million users in nine Asian countries, and Byju’s, a learning app based in India, has seen the number of its users soar to nearly 70 million. Unfortunately, students in many other countries are still floundering with their online classes.

Meanwhile, telehealth efforts in Uganda and several other African countries have extended health care to millions during the pandemic. In a part of the world with a chronic lack of doctors, remote health care has been a lifesaver. Sandy Ong reports on the remarkable success of online learning in Asia and the spread of telemedicine in Africa.

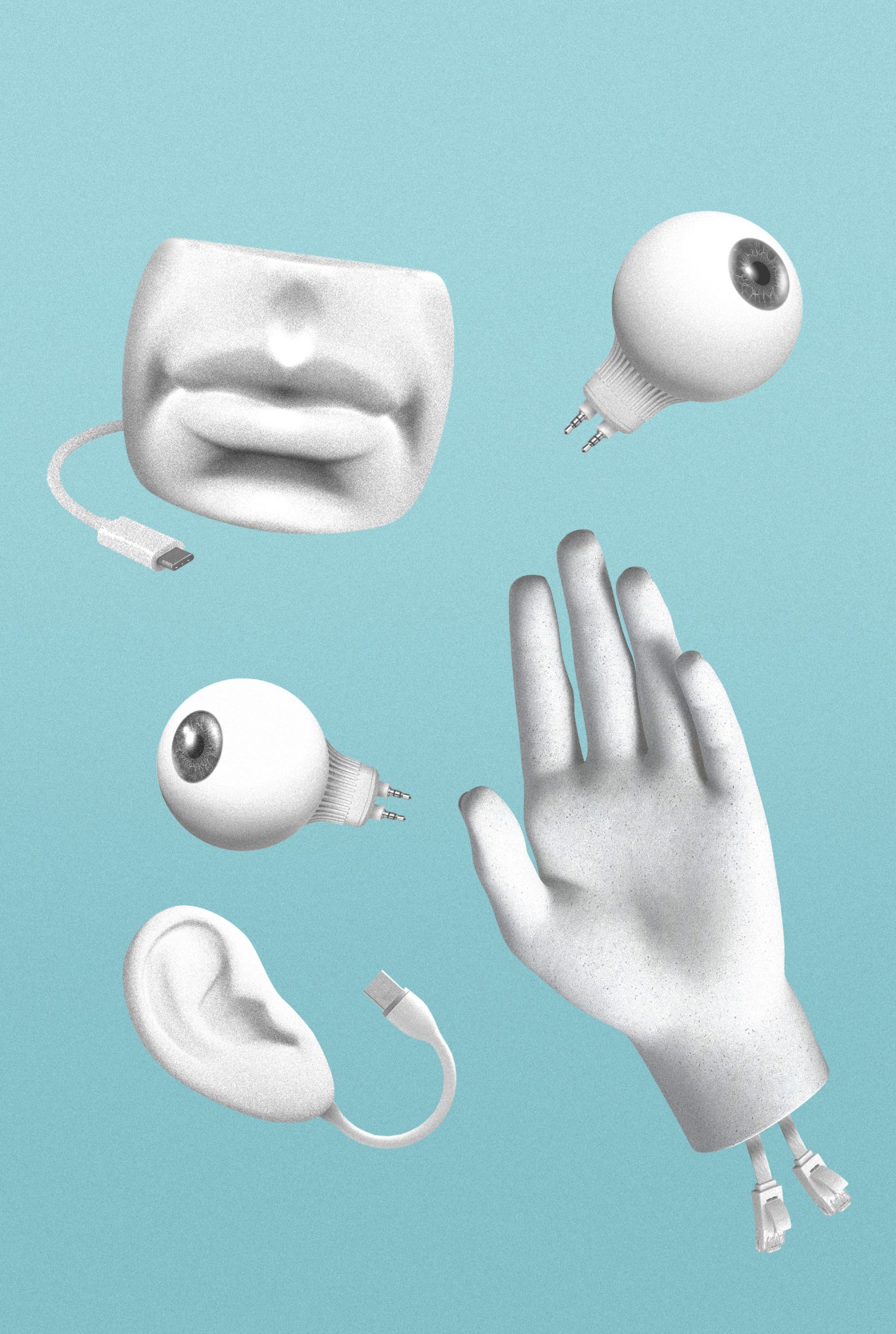

Multi-skilled AI

Despite the immense progress in artificial intelligence in recent years, AI and robots are still dumb in many ways, especially when it comes to solving new problems or navigating unfamiliar environments. They lack the human ability, found even in young children, to learn how the world works and apply that general knowledge to new situations.

One promising approach to improving the skills of AI is to expand its senses; currently AI with computer vision or audio recognition can sense things but cannot “talk” about what it sees and hears using natural-language algorithms. But what if you combined these abilities in a single AI system? Might these systems begin to gain human-like intelligence? Might a robot that can see, feel, hear, and communicate be a more productive human assistant? Karen Hao explains how AIs with multiple senses will gain a greater understanding of the world around them, achieving a much more flexible intelligence.